Importance of Sound Design in Games

One of the biggest misconceptions in game development is that sound design is something you "add at the end." In reality, experienced teams know something very different: sound is not a layer. It is a system that directly interacts with gameplay, psychology, and performance.

You can ship a visually polished game with average audio, but it will feel flat, unresponsive, and forgettable. On the other hand, even relatively simple visuals can feel premium when backed by strong, well-integrated sound design.

This is especially true in high-frequency interaction environments like mobile games and slot games, where players rely heavily on audio feedback loops to interpret outcomes and stay engaged.

The studios that understand this do not treat sound as decoration. They treat it as a core design and retention tool.

Industry Context: Why Audio Is Gaining Strategic Importance

As games evolve into live-service ecosystems, the role of sound has expanded beyond immersion. Modern game design is increasingly focused on:

- Short session loops

- Instant feedback

- Emotional reinforcement

- Behavioral retention

Sound sits at the center of all four.

In mobile-first environments, players often interact with games in distracted contexts: commuting, multitasking, or playing without full visual attention. In these cases, sound becomes a primary feedback channel, not a secondary one.

In slot games, this dynamic is even more pronounced. The core gameplay loop is repetitive by design. What keeps it engaging is not just math or visuals, but the timing, layering, and escalation of audio cues.

This is why leading studios now involve sound designers much earlier in production, often alongside game designers and UI/UX teams. That same cross-discipline alignment is central to building polished, feedback-rich experiences.

What Sound Design Actually Does in a Game System

At a technical level, sound design is about more than creating assets. It is about defining how audio responds to game states, player inputs, and system events.

Every meaningful action in a game typically triggers an audio event, whether it is a button press, a collision, or a reward. These events are not static. They are often parameter-driven and context-sensitive.

🔹 For example, a slot game win sound is rarely a single file. It is usually a layered system:

- Base audio plays immediately

- Additional layers are triggered based on win size

- Music intensity increases dynamically

- Timing adjusts based on animation duration
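
The four points above can be sketched as a small scheduling function. This is a minimal illustration, not a production design: the clip names, the 5x and 20x thresholds, the 0.5 second closing stinger, and the 50x intensity cap are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cue:
    clip: str       # hypothetical asset name
    start_s: float  # seconds after the win is revealed


def build_win_cues(win_multiplier: float, animation_s: float) -> list[Cue]:
    """Return the cues for one win, ordered by start time."""
    cues = [Cue("win_base", 0.0)]  # base layer always plays immediately
    # Extra layers depend on win size; thresholds are illustrative assumptions.
    if win_multiplier >= 5:
        cues.append(Cue("win_coins", 0.15))
    if win_multiplier >= 20:
        cues.append(Cue("win_fanfare", 0.40))
    # Assume a 0.5 s closing stinger that should end with the animation.
    cues.append(Cue("win_end", max(animation_s - 0.5, 0.0)))
    return sorted(cues, key=lambda cue: cue.start_s)


def music_intensity(win_multiplier: float) -> float:
    """Map win size to a 0..1 music intensity, capped at 50x (assumed)."""
    return min(win_multiplier / 50.0, 1.0)
```

A small win yields only the base and closing cues, while a 25x win adds both extra layers and raises the music intensity to 0.5. In a real project the thresholds and offsets would come from the game designer and animation data rather than constants in code.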

🔹 This kind of implementation requires coordination between:

- Game designers, who define triggers

- Developers, who implement logic

- Audio designers, who create variation and layering

Without this integration, sound becomes repetitive and loses its psychological impact.

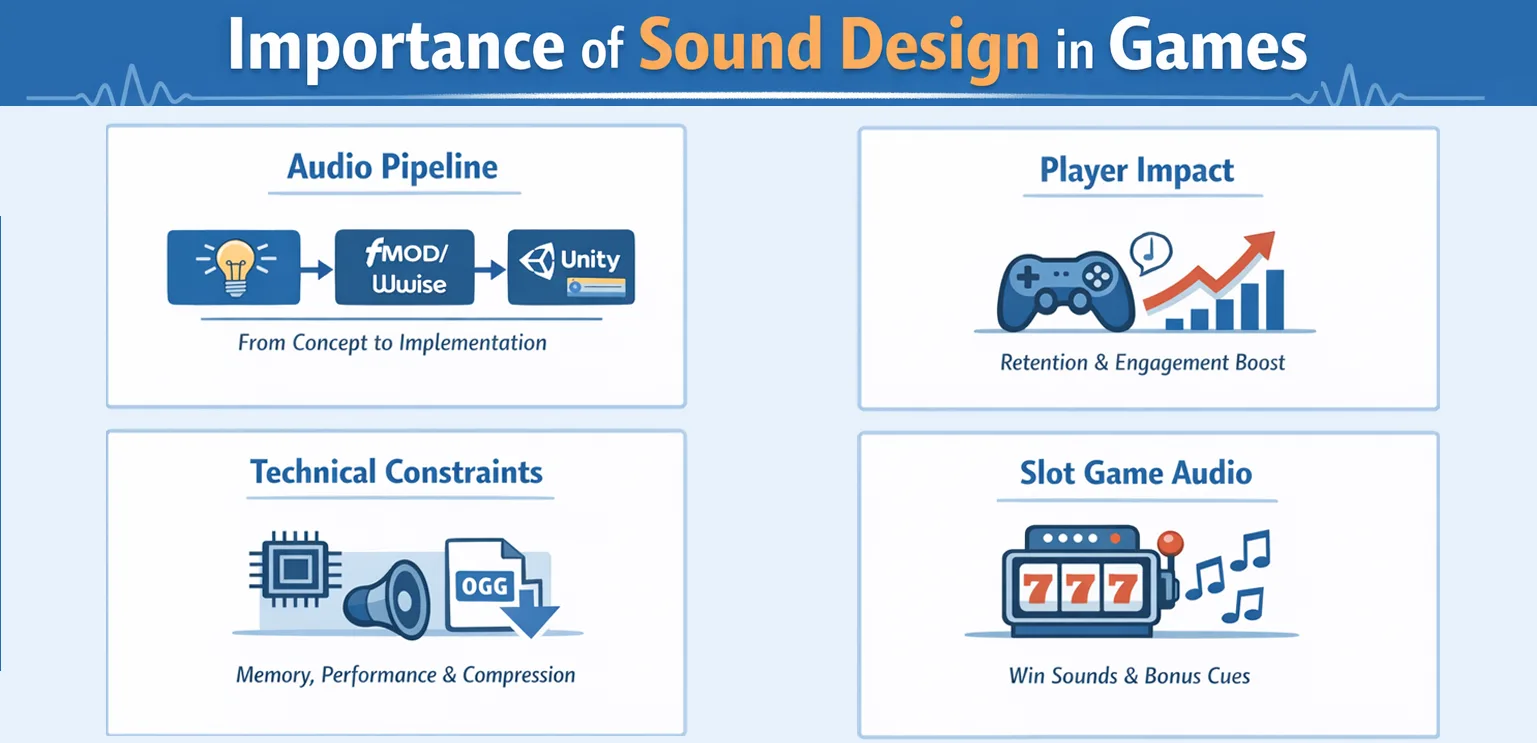

The Real Production Pipeline of Game Audio

In professional game development, sound design follows a structured pipeline that mirrors other disciplines like art and programming.

It typically starts during pre-production, where the team defines an audio style guide. This includes tone, genre, emotional direction, and references. At this stage, decisions like arcade-style versus cinematic audio or minimalist versus layered feedback are made.

During production, audio assets are created in parallel with gameplay systems. However, the key difference from art is that audio is rarely implemented as static assets. Instead, it is integrated through middleware such as FMOD or Wwise, which allows dynamic control over playback.

🔹 These tools enable developers to:

- Trigger sounds based on events

- Adjust parameters like pitch and volume in real time

- Create adaptive music systems

- Manage transitions between audio states
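
To make "adjusting parameters in real time" concrete, here is a sketch of a smoothed parameter that eases toward a target value on every frame, so that intensity changes do not jump audibly. It is written in plain Python and does not use the FMOD or Wwise APIs; the class name and the ramp rate are assumptions for illustration only.

```python
class SmoothedParameter:
    """A 0..1 value that moves toward its target at a fixed rate per second."""

    def __init__(self, value: float = 0.0, rate_per_s: float = 0.5):
        self.value = value
        self.target = value
        self.rate_per_s = rate_per_s  # assumed ramp speed

    def set_target(self, target: float) -> None:
        # Clamp so gameplay code cannot push the parameter out of range.
        self.target = min(max(target, 0.0), 1.0)

    def update(self, dt: float) -> float:
        """Advance by dt seconds and return the new value."""
        step = self.rate_per_s * dt
        delta = self.target - self.value
        if abs(delta) <= step:
            self.value = self.target  # close enough: snap to the target
        else:
            self.value += step if delta > 0 else -step
        return self.value
```

Gameplay code would call `set_target` when the game state changes, and the audio update loop would call `update` once per frame and pass the result to whatever controls music layers or volume.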

In Unity-based pipelines, audio events are often connected through scripts or event systems. This event-driven structure keeps audio synchronized with gameplay logic and interaction timing.
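
The event-driven idea can be shown independently of any engine. The sketch below is a language-neutral illustration in Python, not Unity code: `AudioEventBus`, the event names, and the clip names are hypothetical.

```python
from collections import defaultdict
from typing import Callable


class AudioEventBus:
    """Routes named gameplay events to audio handlers."""

    def __init__(self) -> None:
        self._handlers = defaultdict(list)

    def on(self, event: str, handler: Callable[[dict], None]) -> None:
        """Register a handler that receives the event's parameters."""
        self._handlers[event].append(handler)

    def emit(self, event: str, **params) -> None:
        """Called by gameplay code; audio reacts without being referenced directly."""
        for handler in self._handlers.get(event, []):
            handler(params)


# Usage: record what would be played instead of calling a real audio engine.
played = []
bus = AudioEventBus()
bus.on("button_pressed", lambda p: played.append(("ui_click", p.get("volume", 1.0))))
bus.on("reel_stopped", lambda p: played.append((f"reel_stop_{p['index']}", 1.0)))

bus.emit("button_pressed", volume=0.8)
bus.emit("reel_stopped", index=3)
```

The design choice here is that gameplay code only announces what happened. Which clip plays, and with what parameters, is decided in one place on the audio side.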

Testing and iteration play a huge role. Unlike visuals, audio perception is highly subjective. Teams frequently adjust timing, layering, and intensity based on playtests. A sound that feels exciting in isolation may feel overwhelming or repetitive during extended sessions.

Sound Design in Slot Games: A System Built on Timing and Psychology

Slot games represent one of the most refined uses of sound design in the industry. Unlike narrative or action games, slots rely on micro-interactions repeated hundreds of times per session. This makes timing and variation absolutely critical.

The most important audio system in a slot game is the reward escalation curve.

🔹 When a player spins the reels, the audio system typically follows a structured sequence:

- A neutral or anticipatory spin sound

- Subtle cues as reels slow down

- A small confirmation sound for regular outcomes

- A layered, escalating sequence for wins

What differentiates high-performing slot games is not the presence of these elements, but how they are timed and combined.

For example, near-miss scenarios often use slightly altered audio cues to build tension without delivering a reward. Bonus triggers introduce entirely different soundscapes to signal a shift in gameplay mode. Large wins extend audio sequences to prolong emotional engagement.
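
The spin sequence and its outcome-specific endings can be sketched as a timeline builder. This is an illustrative model under stated assumptions: the cue names and the four outcome labels are invented for the example, and real games would drive these times from reel animation data.

```python
# Hypothetical mapping from spin outcome to the cue played when the last reel stops.
OUTCOME_CUES = {
    "loss": "confirm_small",
    "near_miss": "near_miss_tension",
    "win": "win_sequence",
    "bonus": "bonus_intro",
}


def spin_audio_timeline(reel_stop_times_s: list[float], outcome: str) -> list[tuple[float, str]]:
    """Return (time, cue) pairs for one spin, in playback order."""
    if not reel_stop_times_s:
        raise ValueError("at least one reel stop time is required")
    if outcome not in OUTCOME_CUES:
        raise ValueError(f"unknown outcome: {outcome}")

    timeline = [(0.0, "spin_loop")]  # anticipatory spin sound starts at once
    for index, stop_s in enumerate(reel_stop_times_s, start=1):
        timeline.append((stop_s, f"reel_stop_{index}"))  # cue as each reel settles
    # The outcome cue fires when the final reel has stopped.
    timeline.append((reel_stop_times_s[-1], OUTCOME_CUES[outcome]))
    return timeline
```

Calling `spin_audio_timeline([1.0, 1.4, 1.8], "win")` produces five entries ending with the win sequence at 1.8 seconds. Keeping the sequence as data makes it easier to tune timing in playtests without touching gameplay code.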

Studios working on slot production pipelines, including teams like Gamix Labs, often align animation timing and sound triggers very closely to ensure that visual and audio feedback feel like a single unified system rather than separate layers.

Technical Constraints: The Reality Most Teams Underestimate

One of the biggest gaps in many sound design discussions is the lack of focus on technical constraints. Audio is not free. It impacts:

- Memory usage

- Build size

- CPU performance

This is especially critical for mobile and instant-playable games.

Large uncompressed audio files can significantly increase build size, affecting load times and user acquisition funnels. To manage this, teams use compressed formats such as AAC or Ogg Vorbis, balancing quality and size.
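
A quick back-of-the-envelope calculation shows why compression matters. The sketch assumes 16-bit stereo PCM at 44.1 kHz for the uncompressed case and a constant 128 kbps bitrate for the compressed case, and it ignores container overhead; actual numbers depend on the codec and its settings.

```python
def pcm_bytes(seconds: float, sample_rate_hz: int = 44_100,
              channels: int = 2, bytes_per_sample: int = 2) -> int:
    """Size of uncompressed PCM audio in bytes."""
    return int(seconds * sample_rate_hz * channels * bytes_per_sample)


def compressed_bytes(seconds: float, bitrate_kbps: float) -> int:
    """Approximate size at a constant bitrate, ignoring container overhead."""
    return int(seconds * bitrate_kbps * 1000 / 8)


one_minute_raw = pcm_bytes(60)             # 10,584,000 bytes, about 10.6 MB
one_minute_128k = compressed_bytes(60, 128)  # 960,000 bytes, just under 1 MB
ratio = one_minute_raw / one_minute_128k     # roughly 11x smaller
```

Under these assumptions a single minute of music shrinks from about 10.6 MB to under 1 MB, which adds up quickly across a full soundtrack.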

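Memory management is another challenge. Loading too many audio assets simultaneously can lead to performance issues, especially on low-end devices. This is why many teams implement pooling and streaming strategies: pooling reuses a fixed set of playback voices instead of creating new ones for every sound, and streaming reads long tracks from storage in small chunks instead of loading them whole. Together these help ensure that only necessary sounds are held in memory at any given time.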
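
One possible way to keep only necessary sounds in memory is a least-recently-used clip cache with a byte budget. The sketch below only tracks names and sizes rather than real audio data; `ClipCache` and its methods are hypothetical names, and this is one strategy among several, not a description of how any particular engine works.

```python
from collections import OrderedDict


class ClipCache:
    """Tracks loaded clips and evicts the least recently used when over budget."""

    def __init__(self, budget_bytes: int):
        self.budget_bytes = budget_bytes
        self.used_bytes = 0
        self._clips = OrderedDict()  # clip name -> size in bytes, oldest first

    def request(self, name: str, size_bytes: int) -> list[str]:
        """Mark a clip as needed now; return the names evicted to make room."""
        if name in self._clips:
            self._clips.move_to_end(name)  # already loaded: refresh its recency
            return []
        evicted = []
        # Evict oldest clips until the new one fits. A clip larger than the
        # whole budget still loads after everything else has been evicted.
        while self._clips and self.used_bytes + size_bytes > self.budget_bytes:
            old_name, old_size = self._clips.popitem(last=False)
            self.used_bytes -= old_size
            evicted.append(old_name)
        self._clips[name] = size_bytes
        self.used_bytes += size_bytes
        return evicted
```

With a budget of 100 bytes and three 40-byte clips, requesting the third clip evicts whichever of the first two was used least recently. Long music tracks would normally bypass a cache like this and be streamed instead.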

Latency is also a key factor. Poorly optimized audio can introduce delays between player actions and sound feedback, breaking the sense of responsiveness. Experienced teams design audio systems with these constraints in mind from the start, not as an afterthought.
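
Part of this latency comes from the audio output buffer, and that part is simple arithmetic: buffer length in frames divided by the sample rate. The helper below covers only that component. Devices, operating systems, and wireless headphones add further delay that it does not model, and the function name is an assumption for illustration.

```python
def buffer_latency_ms(buffer_frames: int, sample_rate_hz: int,
                      queued_buffers: int = 1) -> float:
    """Delay contributed by the output buffer(s), in milliseconds."""
    return 1000.0 * buffer_frames * queued_buffers / sample_rate_hz


large = buffer_latency_ms(1024, 48_000)  # about 21.3 ms
small = buffer_latency_ms(256, 48_000)   # about 5.3 ms
```

Smaller buffers reduce delay but give the CPU less time to fill each one, which raises the risk of audible dropouts on low-end devices. That trade-off is why buffer size is worth deciding early.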

Measuring the Impact: How Sound Affects Real Game Metrics

While sound design is often discussed qualitatively, its impact can be measured.

🔹 In mobile and slot games, well-implemented audio systems have been observed to:

- Increase session duration by improving flow and engagement

- Improve retention by reinforcing reward loops

- Reduce perceived repetition through variation

- Enhance conversion during key monetization moments

For example, extending audio sequences during large wins can increase the perceived value of rewards, even when the actual payout remains the same. Similarly, clear and satisfying UI sounds can improve onboarding by helping players understand interactions without relying on tutorials. These effects are subtle but cumulative, and over millions of sessions, they become significant.

Where Most Sound Design Fails and Why

In practice, most games do not fail because they lack sound. They fail because sound is poorly integrated.

A common issue is misalignment between audio and gameplay timing. If a sound triggers too early or too late, it creates a disconnect that players may not consciously notice, but will feel.
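
One way to reason about alignment is to work backward from the visual impact frame. The sketch assumes you know the animation frame where the impact happens, how far into the clip the sound's loudest transient sits, and an estimate of output latency. All parameter names are hypothetical.

```python
def trigger_time_s(impact_frame: int, fps: float, peak_offset_s: float,
                   output_latency_s: float = 0.0) -> float:
    """When to start the clip so its transient lands on the impact frame.

    Returns seconds from the start of the animation, never below zero.
    """
    impact_s = impact_frame / fps
    # Start early enough to absorb the clip's attack and the output delay.
    return max(impact_s - peak_offset_s - output_latency_s, 0.0)
```

For an impact on frame 18 of a 60 fps animation, a clip whose peak is 0.05 seconds in, and 0.02 seconds of output latency, the clip should start about 0.23 seconds into the animation. If the result clamps to zero, the sound cannot be started early enough and the clip or animation needs adjusting.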

Another frequent problem is over-design. Teams sometimes add too many layers or overly complex audio systems, leading to fatigue during longer sessions. This is particularly problematic in slot games, where repetition is inherent.

There is also a tendency to prioritize polish over clarity. Highly produced audio can sound impressive, but if it does not clearly communicate game states, it loses functional value.

Best Practices for Modern Game Audio Systems

The most effective approach is to treat sound design as part of system design, not just asset creation.

Start by defining audio alongside gameplay mechanics. Every core interaction should have a clear audio purpose, whether it is feedback, reinforcement, or emotional signaling.

Use variation intelligently. Instead of creating hundreds of unique sounds, design systems that modify a smaller set of assets through pitch, timing, and layering.
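
A minimal sketch of this idea: pick from a small set of clips, never repeat the previous one, and apply a slight random pitch shift each time. The class name, the clip names, and the pitch range of plus or minus 5 percent are assumptions chosen for illustration.

```python
import random


class VariationPlayer:
    """Chooses a clip and pitch so repeated triggers do not sound identical."""

    def __init__(self, clips, pitch_range=(0.95, 1.05), seed=None):
        if len(clips) < 2:
            raise ValueError("need at least two clips to avoid repeats")
        self.clips = list(clips)
        self.pitch_range = pitch_range
        self._rng = random.Random(seed)  # seedable for reproducible tests
        self._last = None

    def next(self):
        """Return (clip, pitch) for the next trigger."""
        # Exclude the previous clip so the same file never plays twice in a row.
        choices = [c for c in self.clips if c != self._last]
        clip = self._rng.choice(choices)
        pitch = self._rng.uniform(*self.pitch_range)
        self._last = clip
        return clip, pitch


player = VariationPlayer(["coin_a", "coin_b", "coin_c"], seed=1)
picks = [player.next() for _ in range(50)]
```

Three source clips with a small pitch range already produce far more perceived variety than three fixed sounds, at no extra cost in build size.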

🔹 Test in real conditions. Mobile games should be tested:

- With and without headphones

- At low volume

- In noisy environments

This ensures that critical feedback remains effective in real-world usage.

Finally, align audio with other disciplines. Sound should not be developed in isolation. It should evolve alongside animation, UI, and gameplay systems to create a cohesive experience.

Future Trends: Where Game Sound Design Is Heading

Sound design is becoming more dynamic and system-driven. Adaptive audio systems are allowing games to respond in real time to player behavior, creating more personalized experiences. AI tools are beginning to assist in generating variations and speeding up production workflows.

Spatial audio is gaining traction, particularly in immersive and VR environments, but even mobile games are starting to experiment with directional sound cues.

Perhaps the most important trend is the shift toward audio as a retention tool, not just an aesthetic one. As competition increases, studios are investing more in sound design because they recognize its direct impact on engagement and monetization.

Conclusion

Sound design is one of the few disciplines in game development that touches both emotion and functionality simultaneously. It shapes how players feel, how they interpret actions, and how long they stay engaged. For studios, this means sound is not just about quality. It is about integration, timing, and system design.

The difference between an average game and a memorable one often comes down to details that players do not consciously notice, but continuously experience. Sound is one of those details.

FAQs

Why is sound design important in games?

Sound design provides feedback, enhances immersion, and reinforces player actions, making gameplay feel responsive and engaging.

What tools are used for game sound design?

Common tools include FMOD and Wwise, which allow dynamic audio implementation and real-time control over sound behavior.

How does sound design impact player retention?

It reinforces reward systems, creates emotional engagement, and improves gameplay clarity, all of which contribute to longer sessions and repeat play.

What is adaptive audio in games?

Adaptive audio changes dynamically based on gameplay events, player actions, or game states, creating a more immersive experience.

How do you optimize audio for mobile games?

By using compressed formats, managing memory efficiently, reducing latency, and ensuring clarity across different devices and environments.

When should sound design be implemented in development?

Ideally during early production, alongside gameplay and UI design, to ensure proper integration and effectiveness.