The Rise of AI-Composed Game Music: Tools, Trends & What’s Next

For years, music in games was treated as a fixed asset—composed, exported, and looped. Today, that model is being replaced by something far more dynamic: intelligent, system-driven audio powered by AI. This change is not just about efficiency. It’s about how music behaves inside a game.

Instead of asking composers to deliver dozens of variations manually, studios are now exploring ways to generate, adapt, and scale music in real time. For teams working on mobile titles, live-service games, and slot experiences, this is becoming increasingly relevant.

The real transformation is this: Music is no longer just created—it’s generated, controlled, and evolved as part of gameplay systems.

Industry Context: Why AI Music Is Becoming a Production Tool

The adoption of AI-composed music is driven by production realities rather than experimentation. Modern game pipelines demand:

- Rapid iteration

- Continuous content updates

- Scalable asset production

- Shorter release cycles

Traditional audio workflows struggle to keep up, especially when games require frequent updates or multiple variations of similar content.

AI music tools are stepping in to fill this gap—not by replacing composers, but by enabling teams to prototype faster, scale content, and reduce repetitive work.

This is particularly valuable in segments like slot games, where repetition is unavoidable and variation is critical to retention.

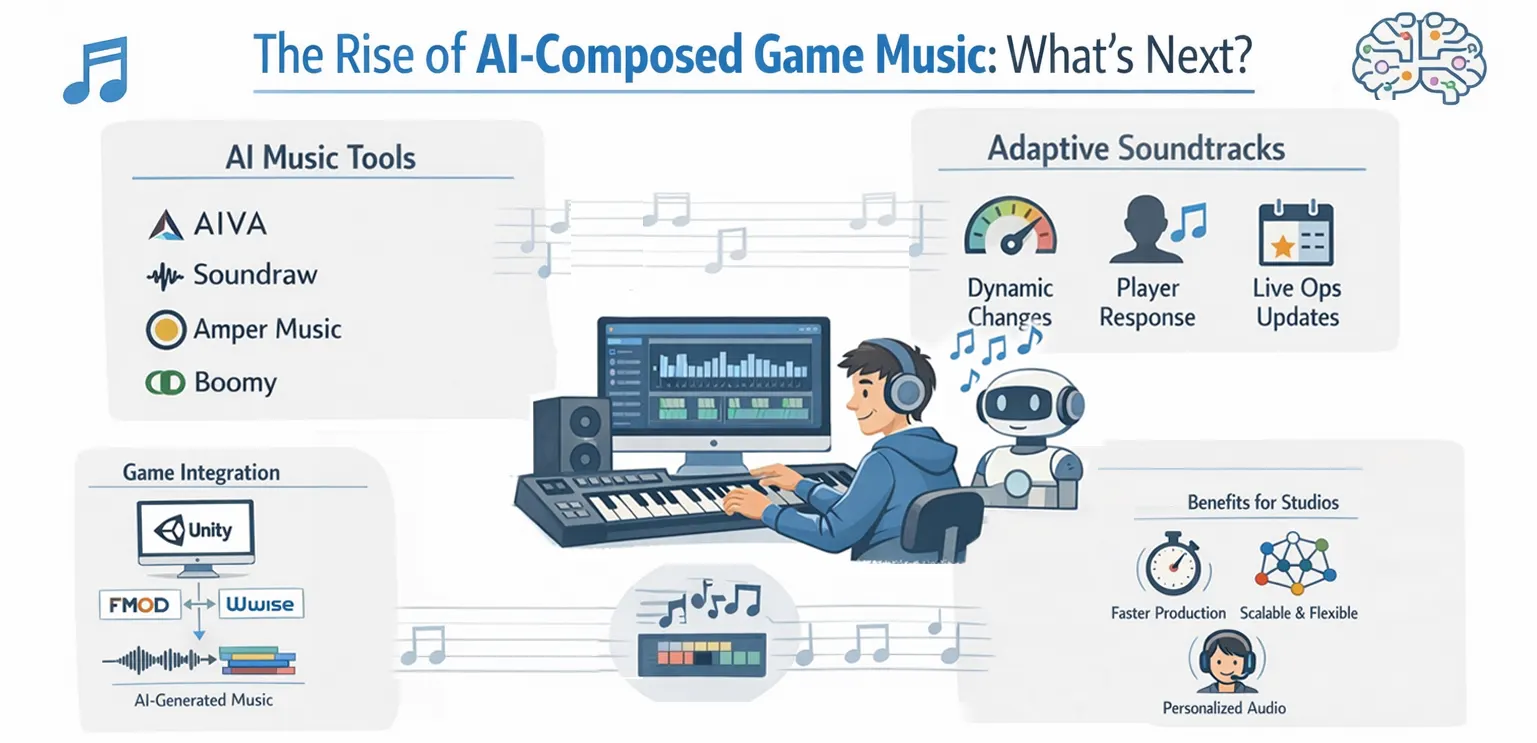

Leading AI Music Tools Used in Game Development

AI music is no longer theoretical. Several tools are already being used in production workflows, each serving slightly different purposes. For example:

🔹 AIVA

AIVA is widely used for generating cinematic-style compositions and structured musical pieces. It’s particularly useful when teams need a strong thematic base quickly.

🔹 Soundraw

Soundraw focuses on customization and variation. Developers can adjust mood, tempo, and structure, making it useful for creating multiple versions of a track without starting from scratch.

🔹 Amper Music

Amper Music (now part of Shutterstock) has historically been used for generating royalty-free music tailored to specific use cases, including games and media.

🔹 Boomy

Boomy allows rapid generation of music loops, which can be useful during prototyping or for lightweight mobile experiences.

Some experimental or research-driven workflows also explore models such as OpenAI Jukebox, a research release that generates raw audio, although these are not yet standard in production pipelines.

The key takeaway is that studios are not relying on a single tool. Instead, they are combining these systems based on:

- Project scope

- Required quality level

- Production timelines

From Tracks to Systems: The Real Shift in Audio Design

The most important change AI introduces is not faster composition—it’s a shift in how music is structured.

Traditional workflows rely on switching between pre-made tracks. AI enables a more fluid approach, where music evolves continuously based on gameplay.

For example, instead of jumping from a “normal” track to a “bonus” track, a system might gradually introduce new layers, increase tempo, or modify instrumentation in response to player actions.

This creates a smoother experience and reduces the jarring transitions that often break immersion.

In practice, this means music is no longer treated as a static file but as a set of controllable parameters—intensity, rhythm, layering—that can be adjusted in real time.
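As a minimal sketch of treating music as parameters rather than a file, the Python below maps a single intensity value (0 to 1) to per-layer volumes. The layer names, thresholds, and the `mix_for` helper are all hypothetical and chosen only for illustration; a real project would tune them by ear and usually express them inside its audio middleware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    fade_start: float  # intensity at which the layer starts to become audible
    fade_end: float    # intensity at which the layer reaches full volume


# Hypothetical layer set; the thresholds are illustrative, not tuned values.
LAYERS = [
    Layer("pads", 0.0, 0.0),          # base layer, always at full volume
    Layer("percussion", 0.25, 0.75),
    Layer("lead", 0.5, 1.0),
]


def layer_gain(layer: Layer, intensity: float) -> float:
    """Linear fade from 0 to 1 between the layer's two thresholds."""
    if intensity >= layer.fade_end:
        return 1.0
    if intensity <= layer.fade_start:
        return 0.0
    return (intensity - layer.fade_start) / (layer.fade_end - layer.fade_start)


def mix_for(intensity: float) -> dict:
    """Return a gain per layer for the given gameplay intensity."""
    clamped = max(0.0, min(1.0, intensity))
    return {layer.name: layer_gain(layer, clamped) for layer in LAYERS}
```

Calling `mix_for(0.5)` leaves the pads at full volume, brings percussion to half volume, and keeps the lead silent, so raising intensity gradually introduces layers instead of switching tracks.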

How AI Music Fits into Real Game Pipelines

Despite the hype, AI music does not replace existing audio systems. It integrates into them.

In most professional pipelines, AI-generated outputs are still routed through middleware like FMOD or Wwise. These systems handle event triggers, transitions, and parameter control.

The difference is that instead of feeding these systems with fixed audio files, teams can now feed them with:

- Generated loops

- Modular stems

- Multiple variations of the same theme

In a Unity-based workflow, a typical implementation might involve triggering an audio event tied to gameplay while passing parameters such as player progress or win intensity. Middleware then uses these parameters to adjust playback dynamically, sometimes combining AI-generated elements with pre-designed layers.
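The flow described above can be sketched in a language-neutral way. The Python below is not the FMOD, Wwise, or Unity API: `AudioEvent`, `MiddlewareStub`, the event name, and the 50x saturation point are all assumptions made up for this example. It only shows the shape of the idea, where gameplay code starts an event once and then keeps updating a normalized parameter.

```python
from dataclasses import dataclass, field


@dataclass
class AudioEvent:
    name: str
    parameters: dict = field(default_factory=dict)


class MiddlewareStub:
    """Hypothetical stand-in for audio middleware; it only records state."""

    def __init__(self) -> None:
        self.active = {}

    def post_event(self, event: AudioEvent) -> None:
        # Start (or restart) an event with its initial parameter values.
        self.active[event.name] = dict(event.parameters)

    def set_parameter(self, event_name: str, parameter: str, value: float) -> None:
        if event_name not in self.active:
            raise KeyError(f"event not playing: {event_name}")
        # Clamp to the 0..1 range this sketch assumes for every parameter.
        self.active[event_name][parameter] = max(0.0, min(1.0, value))


def win_intensity(win_amount: float, bet_amount: float, cap_multiple: float = 50.0) -> float:
    """Map a win-to-bet multiple onto 0..1, saturating at cap_multiple (assumed)."""
    if bet_amount <= 0:
        return 0.0
    return min(win_amount / bet_amount / cap_multiple, 1.0)


middleware = MiddlewareStub()
middleware.post_event(AudioEvent("music/base_game", {"win_intensity": 0.0}))
middleware.set_parameter("music/base_game", "win_intensity", win_intensity(25.0, 1.0))
```

Keeping the gameplay-to-parameter mapping in one small function makes it easy to retune without touching the audio content itself.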

This hybrid approach allows studios to maintain control while benefiting from AI-driven scalability.

Use Cases Where AI Music Delivers Real Value

AI-composed music is not universally necessary, but it excels in specific scenarios.

In Live Ops environments, where games require frequent updates, AI tools allow teams to generate seasonal or event-based variations quickly. Instead of composing entirely new tracks, developers can create variations that maintain consistency while adding freshness.

In slot games, AI can help reduce repetition by introducing subtle variations in win sequences, background loops, or bonus features. Given how frequently players interact with these systems, even small variations can significantly improve perceived quality.
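One simple way to keep variations from feeling repetitive is to avoid replaying the most recently heard ones. The sketch below assumes a pool of pre-generated win stingers; the `VariationPicker` class and the file names are hypothetical.

```python
import random
from collections import deque


class VariationPicker:
    """Pick a random variation while excluding the last `history` picks."""

    def __init__(self, variations, history=2, rng=None):
        self._variations = list(variations)
        if len(self._variations) <= history:
            raise ValueError("need more variations than the history length")
        self._recent = deque(maxlen=history)
        self._rng = rng or random.Random()

    def next(self) -> str:
        # Only variations not heard recently are eligible.
        candidates = [v for v in self._variations if v not in self._recent]
        choice = self._rng.choice(candidates)
        self._recent.append(choice)
        return choice


# Hypothetical asset names for illustration only.
picker = VariationPicker(
    ["win_a.ogg", "win_b.ogg", "win_c.ogg", "win_d.ogg"],
    history=2,
    rng=random.Random(7),
)
sequence = [picker.next() for _ in range(10)]
```

With four variations and a history of two, no stinger is ever repeated within three consecutive wins, while the order still feels unpredictable.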

Studios working on production pipelines—particularly those managing both visuals and audio—are increasingly aligning animation timing with dynamic audio systems. In such setups, partners like Gamix Labs often ensure that visual feedback systems are designed in a way that can support adaptive audio layers, creating a more cohesive player experience.

AI is also highly effective during prototyping. Teams can generate placeholder music quickly, test gameplay pacing, and refine systems before committing to final compositions.

Technical Constraints: Why AI Doesn’t Remove Complexity

A common misconception is that AI simplifies audio production entirely. In reality, it shifts complexity rather than removing it.

Generated audio still needs to meet performance requirements. File sizes must be optimized, especially for mobile and instant-playable games. Compression techniques and streaming strategies remain critical.
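A rough size estimate helps when deciding how many generated variations a build can carry. The sketch below assumes constant-bitrate encoding, so real encoder output will differ somewhat; the function names and the example budget are hypothetical.

```python
def compressed_size_bytes(duration_s: float, bitrate_kbps: float) -> int:
    """Approximate size of a constant-bitrate file: bits per second / 8 * seconds."""
    return int(duration_s * bitrate_kbps * 1000 / 8)


def pcm_size_bytes(duration_s: float, sample_rate_hz: int = 44100,
                   channels: int = 2, bytes_per_sample: int = 2) -> int:
    """Size of uncompressed PCM audio (defaults: 44.1 kHz, stereo, 16-bit)."""
    return int(duration_s * sample_rate_hz * channels * bytes_per_sample)


def fits_budget(sizes, budget_bytes: int) -> bool:
    """True if the combined assets stay within the audio budget."""
    return sum(sizes) <= budget_bytes


loop = compressed_size_bytes(30, 96)   # one 30-second loop at 96 kbps
raw = pcm_size_bytes(30)               # the same loop as uncompressed PCM
ok = fits_budget([loop] * 8, 3_000_000)  # eight variations against a 3 MB budget
```

Under these assumptions a 30-second loop is about 360 KB compressed versus roughly 5.3 MB uncompressed, and eight compressed variations (about 2.9 MB) fit within the example 3 MB budget.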

There is also the challenge of consistency. AI-generated outputs can vary in quality and style, which means teams must implement validation and filtering processes to ensure alignment with the game’s audio identity.
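A validation step can be as simple as checking measured properties of each generated track against a style guide. The sketch below assumes tempo and loudness have already been measured by some separate analysis step; the `TrackInfo` and `StyleGuide` types, the tolerances, and the track names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackInfo:
    name: str
    tempo_bpm: float
    loudness_lufs: float


@dataclass(frozen=True)
class StyleGuide:
    tempo_bpm: float
    tempo_tolerance: float
    loudness_lufs: float
    loudness_tolerance: float


def issues_for(track: TrackInfo, guide: StyleGuide) -> list:
    """Return human-readable reasons a track falls outside the style guide."""
    issues = []
    if abs(track.tempo_bpm - guide.tempo_bpm) > guide.tempo_tolerance:
        issues.append("tempo out of range")
    if abs(track.loudness_lufs - guide.loudness_lufs) > guide.loudness_tolerance:
        issues.append("loudness out of range")
    return issues


def filter_tracks(tracks, guide: StyleGuide):
    """Split tracks into accepted ones and a name -> issues map of rejects."""
    accepted, rejected = [], {}
    for track in tracks:
        issues = issues_for(track, guide)
        if issues:
            rejected[track.name] = issues
        else:
            accepted.append(track)
    return accepted, rejected


guide = StyleGuide(tempo_bpm=120, tempo_tolerance=4, loudness_lufs=-16, loudness_tolerance=2)
candidates = [
    TrackInfo("theme_v1", 120, -16),
    TrackInfo("theme_v2", 128, -16),
    TrackInfo("theme_v3", 121, -12),
]
accepted, rejected = filter_tracks(candidates, guide)
```

Automated checks like these only catch measurable drift; whether a track matches the game's audio identity still needs a human listen.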

Latency and synchronization are equally important. Dynamic systems must respond to gameplay events without a perceptible delay, or they risk breaking immersion. This requires careful integration with game logic and testing across devices.
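One common way to balance responsiveness and synchronization is to delay a musical change until the next beat or bar boundary. The sketch below computes that delay, assuming a constant tempo and a known playback position; the function name and parameters are hypothetical.

```python
def seconds_until_boundary(position_s: float, bpm: float, beats_per_unit: int = 1) -> float:
    """Time until the next beat (beats_per_unit=1) or bar (e.g. 4) boundary."""
    if bpm <= 0 or beats_per_unit <= 0:
        raise ValueError("bpm and beats_per_unit must be positive")
    unit_s = 60.0 / bpm * beats_per_unit
    offset = position_s % unit_s
    # Already on a boundary: the transition can start immediately.
    return 0.0 if offset == 0.0 else unit_s - offset


beat_delay = seconds_until_boundary(1.3, bpm=120)                   # about 0.2 s
bar_delay = seconds_until_boundary(1.3, bpm=120, beats_per_unit=4)  # about 0.7 s
```

Waiting for a beat keeps the worst-case delay at half a second at 120 BPM, while waiting for a full bar sounds cleaner but can delay the change by up to two seconds, so the choice depends on how urgent the gameplay event is.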

Measuring Impact: Does AI Music Improve Game Performance?

While AI music is still evolving, early implementations suggest measurable benefits when used correctly.

Games that introduce dynamic variation may see longer sessions because the experience feels less repetitive, although studios should confirm this with their own testing. In slot environments, extended and adaptive win sequences can increase perceived reward value, even when the underlying math remains unchanged.

However, these gains depend heavily on implementation. Poorly designed systems can feel inconsistent or disjointed, which negatively impacts player experience.

This reinforces an important point: AI is not a shortcut to better audio—it’s a tool that amplifies the quality of your system design.

Where AI Still Falls Short

Despite its advantages, AI-generated music has limitations.

It often lacks the intentional storytelling and emotional nuance that experienced composers bring. While it can generate variations, it may struggle to create truly memorable themes.

There are also unresolved legal and licensing questions around training data and ownership, which studios must consider before adopting AI tools at scale.

For this reason, most successful implementations use AI as a support system, not a replacement.

Future Trends: What’s Coming Next

The next phase of AI in game music will focus on deeper integration.

We are likely to see more systems where music is generated in real time based on player behavior, creating highly personalized experiences. Adaptive soundtracks will become more granular, responding not just to game states but to subtle gameplay patterns.

Another key trend is cross-discipline integration. Audio systems will become more tightly connected with animation, UI, and gameplay mechanics, creating unified feedback systems rather than separate layers.

As tools mature, the barrier to entry will decrease, allowing smaller studios to experiment with dynamic audio systems that were previously resource-intensive.

Strategic Takeaway for Game Studios

The most important decision for studios is not whether to use AI music but how to integrate it effectively. Teams that benefit the most are those that:

- Use AI for variation and scalability

- Maintain human control over creative direction

- Integrate AI into structured pipelines

AI should be seen as a production multiplier, not a creative replacement.

Conclusion

AI-composed game music represents a shift from static assets to dynamic systems.

It allows studios to scale audio production, reduce repetition, and create more responsive experiences. But its true value lies in how well it is integrated into gameplay and design systems.

The future of game audio is not purely human or purely AI-driven.

It is a hybrid model—where human creativity defines the vision, and AI helps bring it to life at scale.

FAQ: AI-Composed Game Music

What are the best AI tools for game music?

Popular tools include AIVA, Soundraw, Amper Music, and Boomy, each offering different strengths in composition, customization, and scalability.

Can AI-generated music be used in commercial games?

Yes, but developers must review licensing terms and ensure compliance with usage rights.

Does AI replace game composers?

No. AI supports composers by generating variations and speeding up workflows, but creative direction still relies on humans.

How is AI music implemented in games?

It is typically integrated through middleware like FMOD or Wwise, allowing dynamic control based on gameplay events.

Is AI music suitable for slot games?

Yes. It helps reduce repetition and create dynamic audio systems that improve engagement.

What is the biggest challenge with AI music?

Maintaining consistency and ensuring that generated content aligns with the game’s artistic vision.